Allio Capital Team

The Macroscope

Why most wealth platforms will try to build—and why many will regret it

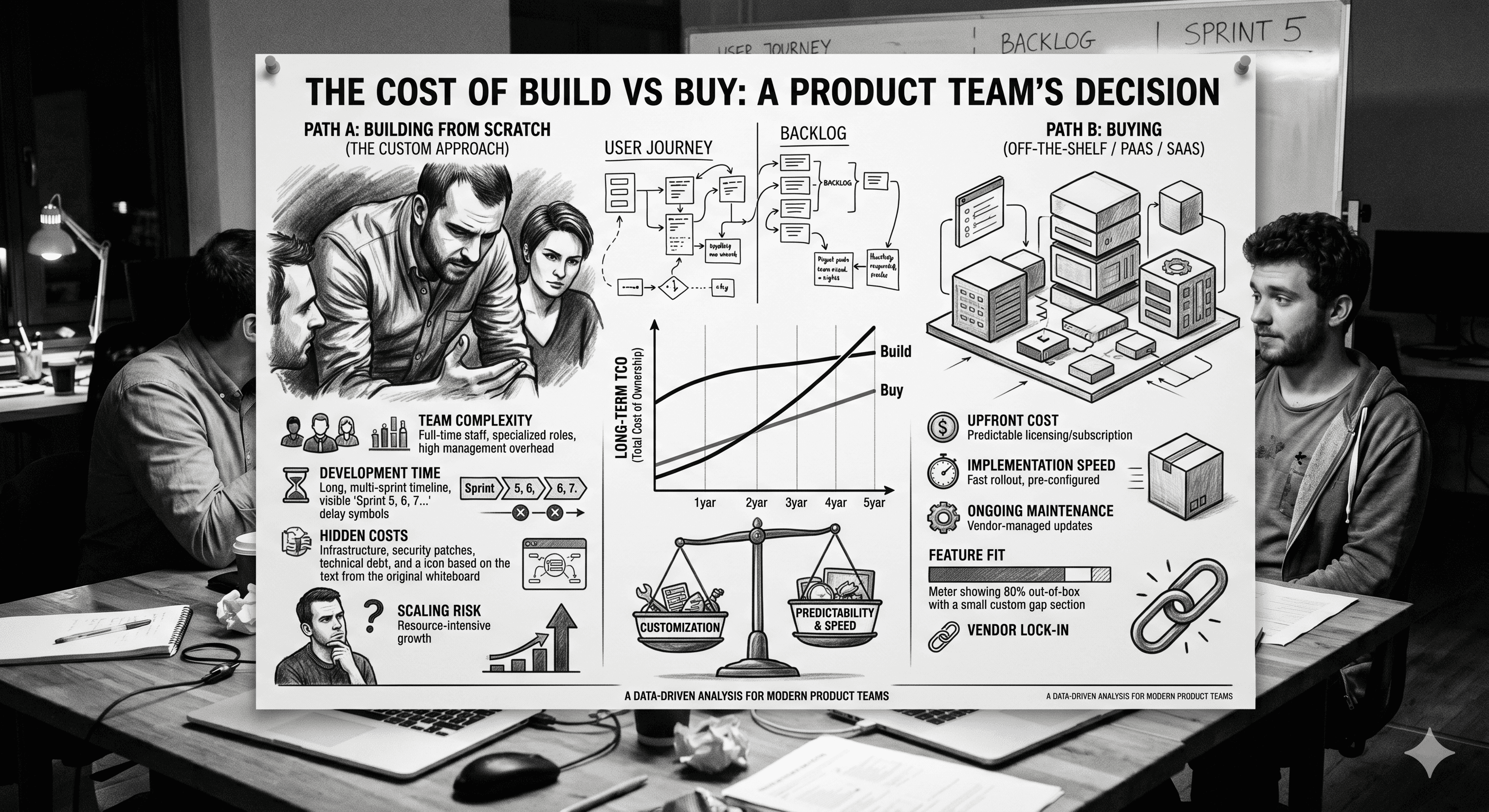

Over the next 12–24 months, nearly every fintech and wealth management platform will face the same strategic question:

Should we build our own AI layer, or should we integrate one?

At first glance, the answer seems obvious. AI is core to the future of financial products. It touches the user experience, portfolio intelligence, client engagement, and ultimately revenue growth. Why wouldn’t a platform want to own something that important?

So they decide to build.

They allocate internal resources. They spin up a small team. They begin experimenting with large language models, prompts, and data pipelines. Progress is made. Early demos look promising.

And then reality sets in.

Because what initially looked like a feature quickly reveals itself to be something much larger:

Not a feature. Not even a product.

An infrastructure layer.

The Misconception: “We’re Just Adding AI”

Most product teams approach AI with a feature mindset. They imagine adding a conversational interface, generating insights, or enhancing existing dashboards with smarter outputs.

But in wealth management, AI doesn’t sit neatly on top of the product. It has to sit within it.

To deliver meaningful value, an AI system needs to understand:

portfolio construction logic

asset-level exposures

macroeconomic relationships

client-specific context

and the implications of change over time

It needs to generate outputs that are not only accurate but also explainable and appropriate in a financial context.

And it needs to do all of this in real time, in response to user input, within the constraints of a regulated environment.

That’s not a feature. That’s a system.

What “Building AI” Actually Requires

Once teams move beyond prototypes, the scope expands quickly.

They need a way to ingest and normalize portfolio data across accounts and asset classes. They need an analytics engine capable of interpreting that data, for example by measuring asset-level exposures and how they respond to macroeconomic conditions. They need to connect that engine to large language models, orchestrating prompts and responses so that outputs are consistent, relevant, and grounded in actual portfolio behavior.

Then comes the interface layer, where natural language inputs are translated into structured queries, and structured outputs are turned back into coherent, user-friendly explanations.
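To make those layers concrete, here is a minimal Python sketch, under heavily simplified assumptions, of the path from raw account data to a plain-language answer. The record schema (`symbol`, `asset_class`, `value`), the function names, and the sample accounts are all hypothetical. The keyword-matching `parse_query` is only a stand-in for the LLM orchestration step, not a description of how a production system would interpret user input.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Holding:
    account: str
    symbol: str
    asset_class: str
    market_value: float


def normalize(raw_accounts):
    """Ingest layer: flatten per-account records (hypothetical schema) into Holdings."""
    holdings = []
    for account, positions in raw_accounts.items():
        for pos in positions:
            holdings.append(Holding(
                account=account,
                symbol=pos["symbol"].upper(),            # consistent ticker casing
                asset_class=pos["asset_class"].lower(),  # consistent class labels
                market_value=float(pos["value"]),
            ))
    return holdings


def exposures_by_asset_class(holdings):
    """Analytics layer: share of total market value held in each asset class."""
    totals = defaultdict(float)
    for h in holdings:
        totals[h.asset_class] += h.market_value
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {cls: value / grand_total for cls, value in totals.items()}


def parse_query(question):
    """Interface layer, inbound: map a question to a structured query.
    Keyword matching stands in for an LLM here (assumption for illustration)."""
    q = question.lower()
    if "allocation" in q or "exposure" in q:
        return {"metric": "asset_class_exposure"}
    return {"metric": "unknown"}


def answer(question, raw_accounts):
    """Interface layer, outbound: turn structured results back into plain language."""
    query = parse_query(question)
    if query["metric"] != "asset_class_exposure":
        return "This sketch can only answer allocation questions."
    weights = exposures_by_asset_class(normalize(raw_accounts))
    if not weights:
        return "No holdings found."
    ranked = sorted(weights.items(), key=lambda kv: kv[1], reverse=True)
    parts = [f"{cls} {w:.0%}" for cls, w in ranked]
    return "Your portfolio allocation is: " + ", ".join(parts) + "."


# Hypothetical sample data: two accounts with inconsistent label casing.
SAMPLE_ACCOUNTS = {
    "brokerage": [{"symbol": "vti", "asset_class": "Equity", "value": 60000}],
    "ira": [
        {"symbol": "bnd", "asset_class": "Fixed Income", "value": 30000},
        {"symbol": "vxus", "asset_class": "equity", "value": 10000},
    ],
}

if __name__ == "__main__":
    # Prints: Your portfolio allocation is: equity 70%, fixed income 30%.
    print(answer("What is my current allocation?", SAMPLE_ACCOUNTS))
```

Even this toy version needs its own schema decisions, aggregation logic, and an explicit fallback for questions it cannot handle. Each of those grows considerably once real custodial data, more asset classes, and an actual language model are involved.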

And surrounding all of this is the need for monitoring, iteration, and refinement. AI systems are not static. They require constant tuning to maintain quality and relevance.

What started as an experiment becomes an ongoing commitment.

The Hidden Costs of Building In-House

The most obvious cost is time. Even well-resourced teams can spend months, if not years, moving from initial concept to production-ready system.

But the more significant cost is opportunity.

While internal teams are focused on building foundational AI capabilities, they are not focusing on differentiation. They are not improving their core product. They are not shipping features that directly impact growth.

They are, in effect, rebuilding infrastructure that is rapidly becoming standardized.

There is also the risk of partial solutions. Many internally built systems never fully reach the level of sophistication required to deliver consistent value. They remain stuck in a state of “almost there,” where the promise of AI is visible, but the experience falls short.

From a user perspective, this can be worse than not having the feature at all.

The Speed Problem

In fast-moving markets, timing matters as much as capability.

The shift toward AI-driven interaction in fintech is not theoretical. It is happening now. Users are already being conditioned to expect conversational interfaces and personalized insights. Competitors are beginning to experiment and, in some cases, to deploy.

This creates a narrowing window.

The firms that move early have the opportunity to define the standard. They shape user expectations and capture mindshare. Those that move later are forced into a reactive position, trying to catch up to an experience that users have already internalized.

Building internally, in this context, is not just a technical decision. It is a strategic bet on how quickly you can execute relative to the market.

The Case for Buying (or Integrating)

Against this backdrop, a different approach is gaining traction.

Instead of building an AI layer from scratch, firms are integrating modular, API-driven solutions that are specifically designed for financial use cases. These systems provide the underlying intelligence layer—portfolio analysis, natural language interaction, and insight generation—while allowing the platform to retain control over the user experience.

This approach changes the equation in several ways.

First, it dramatically reduces time to market. What might take years to build can be implemented in months or even weeks, depending on the level of integration.

Second, it shifts internal focus back to differentiation. Instead of allocating resources to foundational infrastructure, teams can concentrate on how AI is presented, how it fits into the broader product, and how it enhances the overall user journey.

Third, it reduces risk. Proven systems, built specifically for the domain, are less likely to produce inconsistent or unreliable outputs. They come with the benefit of prior iteration and real-world validation.

In effect, buying or integrating allows firms to leapfrog the most challenging part of the build process.

The Strategic Lens: Build vs. Buy Is Really Build vs. Compete

At a deeper level, this decision is not just about technology.

It’s about competition.

If AI becomes the primary interface through which users engage with their portfolios, as current signals suggest it will, then the quality of that interface becomes a key competitive differentiator.

The question then becomes:

Do you want to compete on infrastructure, or do you want to compete on experience?

Building internally means competing on both, which is significantly harder. Integrating an existing solution allows you to treat the AI layer as infrastructure and focus your competitive energy on how it is used.

This is the same pattern that has played out across other areas of technology. Very few companies build their own cloud infrastructure. Very few build their own payment rails. As markets mature, certain capabilities become standardized, and value shifts to the layers above.

AI in wealth management appears to be following a similar trajectory.

Where This Leaves the Market

In the near term, most fintech firms will attempt some form of internal build. It’s a natural instinct, driven by the desire for control and differentiation.

But over time, the market will likely bifurcate.

A small number of firms, with significant resources and deep technical expertise, will succeed in building robust internal systems. They will invest heavily and treat AI as a core competency.

The majority, however, will realize that the cost, complexity, and time required to build at that level are prohibitive. They will look for alternatives that allow them to achieve similar outcomes without the same level of investment.

This is where integration and acquisition become increasingly attractive.

The Window of Opportunity

For firms evaluating this decision today, there is a window of opportunity.

AI in wealth management is still early enough that the playing field has not fully settled. There is still time to move decisively, to adopt the right approach, and to position the product ahead of competitors.

But that window will not remain open indefinitely.

As more platforms adopt AI layers—whether built or bought—the baseline expectation will rise. What is currently differentiated will become standard. At that point, the focus will shift again, and late adopters will find themselves perpetually behind.

Final Thought

Every major shift in technology forces a version of this decision.

Do you build the underlying capability yourself, or do you adopt an existing solution and focus on what sits on top?

In fintech, the answer has often depended on the layer in question. Core differentiators are built. Commodity infrastructure is bought.

The challenge with AI is that it feels like both.

But as the market evolves, one thing is becoming clear:

The firms that win will not be the ones that simply have AI.

They will be the ones that deliver it to users in the most effective, intuitive, and immediate way.

And increasingly, that may not require building it at all.